The quantum threat

For years, the idea of quantum computers breaking modern encryption felt like a distant worry. That's changing, and quickly. The response is post-quantum cryptography (PQC): cryptographic systems designed to withstand attacks from both classical and quantum computers. Many experts now treat it as a matter of when, not if, quantum computers will be powerful enough to compromise our current security infrastructure.

Today's most common public-key methods, RSA and Elliptic Curve Cryptography (ECC), rely on mathematical problems that are infeasible for classical computers to solve at practical key sizes: factoring large integers and computing discrete logarithms. However, a quantum algorithm called Shor's algorithm solves both problems efficiently on a sufficiently large, error-corrected quantum computer. The algorithm itself is well established; what is missing is hardware capable of running it at scale. Progress in quantum computing is accelerating, and the potential impact on cybersecurity is enormous.
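To make the threat concrete, here is a minimal sketch of the classical half of Shor's approach: once the order (period) of a number modulo N is known, factoring N takes only a couple of gcd computations. The brute-force `find_order` loop below stands in for the quantum period-finding step, which is the only part that needs a quantum computer. The function names are illustrative, not from any library.

```python
from math import gcd


def find_order(a: int, n: int) -> int:
    """Return the smallest r > 0 with a**r % n == 1, by brute force.

    This loop is the step Shor's algorithm replaces with quantum period
    finding. Done classically, it is hopeless for real key sizes.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a: int, n: int):
    """Try to split n using the order of a modulo n; None means pick another a."""
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order gives no square root to work with
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1, no factor revealed
    # half**2 == 1 (mod n) with half != +-1, so half - 1 and half + 1
    # each share a nontrivial factor with n.
    return gcd(half - 1, n), gcd(half + 1, n)


print(factor_from_order(7, 15))  # (3, 5)
```

An RSA modulus is just a much larger N. The reduction is the same; only the period-finding step is out of reach for classical machines.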

Dates such as 2026 and 2030 are often cited as milestones, although nobody can say precisely when a quantum computer capable of breaking current encryption will arrive. Agencies such as the National Security Agency (NSA) are urging migration on roughly that timescale, and the reason is what is often called "harvest now, decrypt later": an adversary can record encrypted data today and decrypt it once a capable machine exists, so data stolen now could be compromised within the next decade. This is what drives the urgent need to transition to PQC. The goal is not only to stop a future attack, but to protect data that must remain confidential for years to come.
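The timing argument above is often summarized as Mosca's inequality: there is a problem if the time data must stay secret plus the time needed to migrate exceeds the time until a capable quantum computer exists. A small sketch follows; the function name and the example figures are assumptions chosen for illustration, not estimates from this article.

```python
def exposure_years(shelf_life: float, migration_time: float,
                   years_until_quantum: float) -> float:
    """Years by which the need for secrecy outlasts the protection.

    Mosca's inequality: data is at risk when
    shelf_life + migration_time > years_until_quantum.
    """
    return max(0.0, shelf_life + migration_time - years_until_quantum)


# Hypothetical planning figures: records must stay secret for 10 years,
# migration takes 5 years, and a capable machine is assumed 12 years away.
print(exposure_years(10, 5, 12))  # 3 years of exposure
print(exposure_years(2, 3, 10))   # 0.0, no exposure under these assumptions
```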

NIST standardization

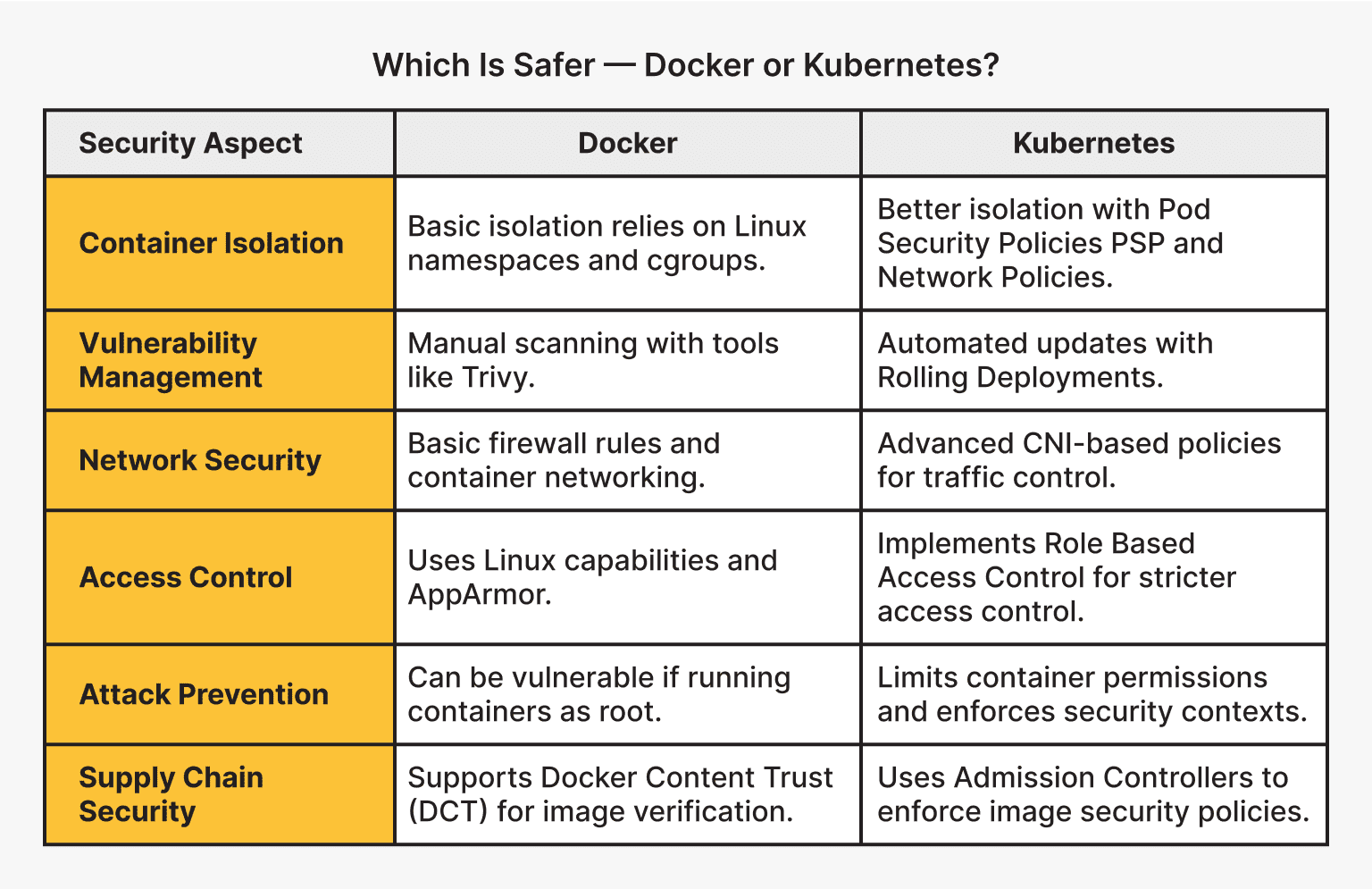

Recognizing the threat, the National Institute of Standards and Technology (NIST) launched a PQC standardization process in 2016. This ambitious project aims to identify and standardize a new generation of cryptographic algorithms resilient to quantum attacks. The process involved multiple rounds of evaluation, with submissions from researchers around the globe.

NIST categorized the proposed algorithms into five main families: lattice-based, code-based, multivariate, hash-based, and isogeny-based. Each approach relies on different mathematical problems believed to be hard for quantum computers to solve. In July 2022, NIST announced the first set of algorithms selected for standardization: CRYSTALS-Kyber for key encapsulation and CRYSTALS-Dilithium, FALCON, and SPHINCS+ for digital signatures.

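NIST has since turned these selections into standards. In August 2024 it published FIPS 203 (ML-KEM, derived from CRYSTALS-Kyber), FIPS 204 (ML-DSA, derived from CRYSTALS-Dilithium), and FIPS 205 (SLH-DSA, derived from SPHINCS+); a standard based on FALCON is still in preparation. The process is not finished, though. NIST continues to evaluate additional candidates, and in March 2025 it selected HQC as a further KEM, so the list of standardized algorithms is expected to grow.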

- CRYSTALS-Kyber: Key Encapsulation Mechanism (KEM)

- CRYSTALS-Dilithium: Digital Signature Algorithm

- FALCON: Digital Signature Algorithm

- SPHINCS+: Digital Signature Algorithm

NIST Post-Quantum Cryptography Standardization Finalists and Alternates

| Algorithm Name | Category | Representative Size (approximate) | Strengths | Weaknesses |
|---|---|---|---|---|
| CRYSTALS-Kyber | Key-Encapsulation Mechanism (KEM) | 1,184-byte public key, 1,088-byte ciphertext (Kyber768) | Generally fast, relatively small key and ciphertext sizes, strong security assumptions. | Performance can vary depending on implementation; potential concerns regarding algebraic structure. |
| CRYSTALS-Dilithium | Digital Signature | 1,312-byte public key, 2,420-byte signature (Dilithium2) | Good performance, moderate signature size, strong security assumptions. | Keys and signatures are much larger than those of classical algorithms such as ECDSA. |
| FALCON | Digital Signature | 897-byte public key, roughly 666-byte signature (Falcon-512) | Smallest signature size among the finalists, relatively fast verification. | More complex implementation, potential concerns around side-channel attacks. |
| SPHINCS+ | Digital Signature | Public key of 32 to 64 bytes; signatures from about 8 KB to about 50 KB depending on parameter set | Stateless signature scheme, security rests only on the underlying hash function. | Signatures are significantly larger than other schemes; slower signing speed. |
| BIKE | Key-Encapsulation Mechanism (KEM) | About 1.5 KB public key (security level 1) | Based on well-studied code-based cryptography. | Larger key sizes compared to lattice-based KEMs; performance can be a concern. |
| HQC | Key-Encapsulation Mechanism (KEM) | Variable, dependent on parameters | Relatively simple structure, potential for efficient implementation. | Security relies on the hardness of decoding problems; parameter selection is crucial. |
| Classic McEliece | Key-Encapsulation Mechanism (KEM) | About 261 KB public key (mceliece348864) | Long history of study, strong security based on well-understood mathematical problems. | Extremely large public key, making it impractical for many applications. |

Illustrative comparison. Verify sizes and parameter sets against the current specifications before relying on it.

Lattice-based cryptography

Lattice-based cryptography has emerged as a leading contender in the NIST PQC standardization process. At its heart, it relies on the difficulty of solving certain mathematical problems involving high-dimensional lattices, which you can think of as regularly spaced points in a multi-dimensional space. Finding the "shortest vector" in such a lattice is believed to be computationally hard, even for quantum computers.
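Schemes such as Kyber build on a related lattice problem, learning with errors (LWE): recovering a secret vector from many noisy linear equations. The sketch below is a toy, Regev-style single-bit encryption that shows the mechanics. It is not Kyber, the parameters are far too small to be secure, and all names are illustrative.

```python
import random

N, M, Q = 8, 20, 257  # toy dimension, sample count, modulus (NOT secure)


def keygen(rng: random.Random):
    """Secret s, and public noisy equations b_i = <a_i, s> + e_i (mod Q)."""
    secret = [rng.randrange(Q) for _ in range(N)]
    a_rows = [[rng.randrange(Q) for _ in range(N)] for _ in range(M)]
    b_vals = [
        (sum(a * s for a, s in zip(row, secret)) + rng.choice((-1, 0, 1))) % Q
        for row in a_rows
    ]
    return secret, (a_rows, b_vals)


def encrypt(public, bit: int, rng: random.Random):
    """Sum a random subset of the equations and hide the bit at Q // 2."""
    a_rows, b_vals = public
    chosen = [i for i in range(M) if rng.random() < 0.5]
    u = [sum(a_rows[i][j] for i in chosen) % Q for j in range(N)]
    v = (sum(b_vals[i] for i in chosen) + bit * (Q // 2)) % Q
    return u, v


def decrypt(secret, ciphertext) -> int:
    """Strip <u, s>; what remains is small noise plus bit * (Q // 2)."""
    u, v = ciphertext
    noisy = (v - sum(a * s for a, s in zip(u, secret))) % Q
    # The accumulated noise is at most M = 20 in magnitude, well under Q // 4.
    return 1 if Q // 4 < noisy < 3 * Q // 4 else 0


rng = random.Random(0)  # seeded for repeatability; never use for real keys
secret, public = keygen(rng)
print([decrypt(secret, encrypt(public, bit, rng)) for bit in (0, 1, 1, 0)])
```

Without the secret, an attacker sees only noisy equations; removing the noise is the hard lattice problem. Real schemes choose the dimension, modulus, and noise distribution carefully so that decryption stays correct while the problem stays hard.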

The appeal of lattice-based schemes lies in their strong security assumptions and relatively efficient performance. They offer a good balance between security and practicality, making them suitable for a wide range of applications. They also benefit from a relatively well-understood mathematical foundation, allowing for thorough security analysis.

However, lattice-based cryptography isn’t without its challenges. Parameter selection is crucial; poorly chosen parameters can weaken the security of the scheme. There are also concerns about potential side-channel attacks, where attackers exploit implementation details to gain information about the secret key. While these challenges are being actively addressed, they require careful consideration during implementation.

Impact on TLS and SSH

Many of the secure communications we rely on daily – browsing the web, sending emails, accessing remote servers – are protected by protocols like TLS (Transport Layer Security) and SSH (Secure Shell). These protocols depend on the encryption algorithms we’ve discussed. Introducing PQC algorithms into these protocols is a complex undertaking.

The primary challenge is maintaining backward compatibility. A phased approach is necessary, and hybrid approaches – combining classical and post-quantum algorithms – are currently the most practical solution.

For example, a TLS connection might negotiate both an ECC key exchange and a PQC key exchange and derive its session keys from the two results together. The connection then remains secure as long as at least one of the algorithms is unbroken. While specific implementation details are still being worked out, the goal is to integrate PQC without disrupting existing infrastructure. I'm not certain of the exact API changes required at this point, but the IETF is actively working on standards.
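The combining step in a hybrid exchange can be sketched with the standard library alone. The example below concatenates two fixed-length shared secrets and runs them through HKDF (RFC 5869). The two input secrets are random placeholders standing in for the outputs of a real ECC exchange and a real PQC KEM, and the salt and label are made up for the demonstration; actual protocols define their own key schedules.

```python
import hashlib
import hmac
import os


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF with SHA-256 (RFC 5869): extract a key, then expand it."""
    if length > 255 * 32:
        raise ValueError("requested output is too long for HKDF-SHA256")
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()  # extract
    okm, block, counter = b"", b"", 1
    while len(okm) < length:  # expand
        block = hmac.new(prk, block + info + bytes([counter]),
                         hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine_hybrid(classical_secret: bytes, pq_secret: bytes,
                   context: bytes) -> bytes:
    """Derive one session key from both secrets.

    An attacker must recover BOTH inputs to predict the output, which is
    the property that makes the hybrid approach attractive.
    """
    ikm = classical_secret + pq_secret  # both inputs have fixed lengths
    return hkdf_sha256(ikm, salt=b"\x00" * 32, info=b"hybrid-demo" + context)


# Placeholders: in a real handshake these come from the two key exchanges.
ecc_shared = os.urandom(32)
pqc_shared = os.urandom(32)
session_key = combine_hybrid(ecc_shared, pqc_shared, b"client-server-1")
print(session_key.hex())
```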

Preparing network infrastructure

Preparing for PQC isn’t a one-time fix; it’s a long-term strategic project. The first step is to assess your organization’s cryptographic posture. Identify where encryption is used – in transit, at rest, for digital signatures – and which algorithms are currently employed. This inventory will help you prioritize your PQC migration efforts.
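A first pass at such an inventory can be as simple as tagging each recorded algorithm by whether Shor's algorithm threatens it. The sketch below assumes a hand-maintained list of entries; the systems, field names, and prefix lists are hypothetical and far from complete.

```python
# Illustrative prefix lists, not a complete catalogue of algorithm names.
POST_QUANTUM = ("ML-KEM", "ML-DSA", "SLH-DSA")
QUANTUM_VULNERABLE = ("RSA", "ECDSA", "ECDH", "DSA", "DH", "ED25519", "X25519")


def classify(algorithm: str) -> str:
    """Return 'post-quantum', 'migrate', or 'review' for an algorithm name."""
    name = algorithm.strip().upper()
    if name.startswith(POST_QUANTUM):
        return "post-quantum"
    if name.startswith(QUANTUM_VULNERABLE):
        return "migrate"
    return "review"  # not covered by the lists above; check by hand


def migration_candidates(inventory):
    """Entries whose algorithm relies on factoring or discrete logarithms."""
    return [e for e in inventory if classify(e["algorithm"]) == "migrate"]


# Hypothetical inventory entries.
INVENTORY = [
    {"system": "public website", "use": "TLS key exchange", "algorithm": "ECDH-P256"},
    {"system": "build server", "use": "code signing", "algorithm": "RSA-3072"},
    {"system": "backup archive", "use": "encryption at rest", "algorithm": "AES-256-GCM"},
    {"system": "pilot VPN", "use": "key exchange", "algorithm": "ML-KEM-768"},
]

for entry in migration_candidates(INVENTORY):
    print(f'{entry["system"]}: {entry["algorithm"]} ({entry["use"]})')
```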

Systems need cryptographic agility: you should be able to swap algorithms without rewriting the entire codebase. Avoid hardcoding specific algorithms, key sizes, or parameters; put them behind standardized interfaces and select them through configuration instead. This makes it easier to adapt as NIST's standards and guidance continue to evolve. CISA provides a preparation guide for this transition.
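One common way to get this kind of agility is a registry that maps algorithm names from configuration to implementations. The sketch below uses two HMAC variants from the standard library as stand-ins for real signature algorithms; the registry, the `mac_algorithm` config key, and the function names are all assumptions made for illustration.

```python
import hashlib
import hmac
import json
from typing import Callable, Dict

Authenticator = Callable[[bytes, bytes], bytes]
_REGISTRY: Dict[str, Authenticator] = {}


def register(name: str):
    """Decorator that adds an implementation under a configurable name."""
    def wrap(fn: Authenticator) -> Authenticator:
        _REGISTRY[name] = fn
        return fn
    return wrap


@register("hmac-sha256")
def _hmac_sha256(key: bytes, message: bytes) -> bytes:
    return hmac.new(key, message, hashlib.sha256).digest()


@register("hmac-sha512")
def _hmac_sha512(key: bytes, message: bytes) -> bytes:
    return hmac.new(key, message, hashlib.sha512).digest()


def authenticate(config_json: str, key: bytes, message: bytes) -> bytes:
    """Pick the algorithm named in the config; callers never name it in code."""
    name = json.loads(config_json)["mac_algorithm"]
    try:
        implementation = _REGISTRY[name]
    except KeyError:
        raise ValueError(f"unsupported algorithm: {name}") from None
    return implementation(key, message)


# Switching algorithms is a configuration change, not a code change.
tag = authenticate('{"mac_algorithm": "hmac-sha512"}', b"demo-key", b"hello")
print(len(tag))  # 64
```

Adding a post-quantum algorithm later would mean registering one more implementation and updating the configuration, with no changes at the call sites.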

Hardware Security Modules (HSMs) will also play a crucial role. These specialized hardware devices protect cryptographic keys and perform cryptographic operations. To support PQC, HSMs need to be upgraded to support the new algorithms. This is a significant investment, but it’s essential for securing sensitive data. This transition will take time and resources, but proactive preparation is key to mitigating the quantum threat.

Digital signatures

While much of the focus on PQC is on encryption, it's equally important to consider the impact on digital signatures. Shor's algorithm also breaks existing digital signature schemes such as RSA and ECDSA, because they rest on the same mathematical problems. This has implications for code signing, document verification, and other applications that rely on digital signatures.

Post-quantum digital signature algorithms, such as CRYSTALS-Dilithium and FALCON (both selected by NIST), are designed to resist attacks from both classical and quantum computers. Both are built on lattice problems rather than on the factoring and discrete-logarithm problems behind RSA and ECDSA, so Shor's algorithm does not apply to them.
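Dilithium and FALCON need a dedicated library, but the hash-based idea behind SPHINCS+ can be illustrated with the standard library. The sketch below is a Lamport one-time signature, a much simpler ancestor of SPHINCS+ and not SPHINCS+ itself. Each key pair may sign only one message, and the function names are illustrative.

```python
import hashlib
import secrets


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def keygen():
    """256 pairs of random secrets; the public key is their hashes."""
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(_h(zero), _h(one)) for zero, one in sk]
    return sk, pk


def _bits(message: bytes):
    """The 256 bits of SHA-256(message), most significant bit first."""
    digest = _h(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def sign(sk, message: bytes):
    """Reveal one secret from each pair, chosen by the digest bits."""
    return [sk[i][bit] for i, bit in enumerate(_bits(message))]


def verify(pk, message: bytes, signature) -> bool:
    """Hash each revealed secret and compare with the public key."""
    if len(signature) != 256:
        return False
    return all(
        _h(revealed) == pk[i][bit]
        for i, (revealed, bit) in enumerate(zip(signature, _bits(message)))
    )


sk, pk = keygen()
signature = sign(sk, b"firmware v1.2")
print(verify(pk, b"firmware v1.2", signature))  # True
print(verify(pk, b"firmware v1.3", signature))  # False
print(sum(len(part) for part in signature))     # 8192 bytes
```

Security rests only on the hash function, which is why hash-based schemes are considered conservative choices. The 8 KB signature for a single message also shows why signature size is the usual trade-off for this family.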

Protecting digital signatures is crucial for ensuring the integrity and authenticity of software and data. Without secure digital signatures, attackers could potentially tamper with software updates or forge documents, leading to serious consequences. This aspect of PQC is often overlooked, but it is just as critical as securing encryption.

Resources and updates

The field of post-quantum cryptography is evolving rapidly. Staying informed about the latest developments is essential for organizations preparing for the quantum threat. Several valuable resources are available to help you stay up-to-date.

The NIST PQC website hosts the latest documentation on the standardization process. CISA also publishes a migration guide for federal agencies and private partners that outlines specific transition steps.

Industry blogs, academic research papers, and conferences are also excellent sources of information. Continuously learning and adapting to the changing landscape is vital for ensuring the long-term security of your systems. This isn’t a "set it and forget it" situation; it demands ongoing attention and investment.
