The looming quantum threat

For decades, we’ve relied on the mathematical difficulty of certain problems to keep our data secure. Online banking, secure communications, and digital signatures all depend on encryption algorithms that would take today’s classical computers millennia to break. Quantum computing threatens that assumption. Quantum computers aren’t just faster versions of today’s machines; they operate on fundamentally different principles, and for some of the math problems that encryption depends on, those principles offer dramatic shortcuts.

The core threat comes from algorithms like Shor’s algorithm, developed by mathematician Peter Shor in 1994. This algorithm can efficiently factor large numbers, the foundation of widely used encryption methods like RSA, and solve the discrete logarithm problem, which underlies the security of Elliptic Curve Cryptography (ECC). If a sufficiently powerful quantum computer were built, it could break these encryption methods outright, exposing vast amounts of sensitive data.

The timeline for preparing is often framed around 2026. That date doesn’t mark the expected arrival of a code-breaking quantum computer; it reflects the pace of standardization by the National Institute of Standards and Technology (NIST), which has been identifying and standardizing new cryptographic algorithms resistant to attacks from both classical and quantum computers and published its first final post-quantum standards in August 2024. Preparing now isn’t about reacting to an immediate crisis, but about adapting ahead of a future in which today’s public-key encryption is compromised. We need to build systems that can withstand this shift.

What current encryption stands to lose

RSA, ECC, and Diffie-Hellman are the workhorses of modern cryptography, but they’re all vulnerable to Shor’s algorithm. RSA, used extensively in digital signatures and key exchange, relies on the difficulty of factoring large numbers. ECC, favored for its efficiency, secures many web connections and cryptocurrency transactions. Diffie-Hellman, a key exchange protocol, is fundamental to establishing secure communications channels.

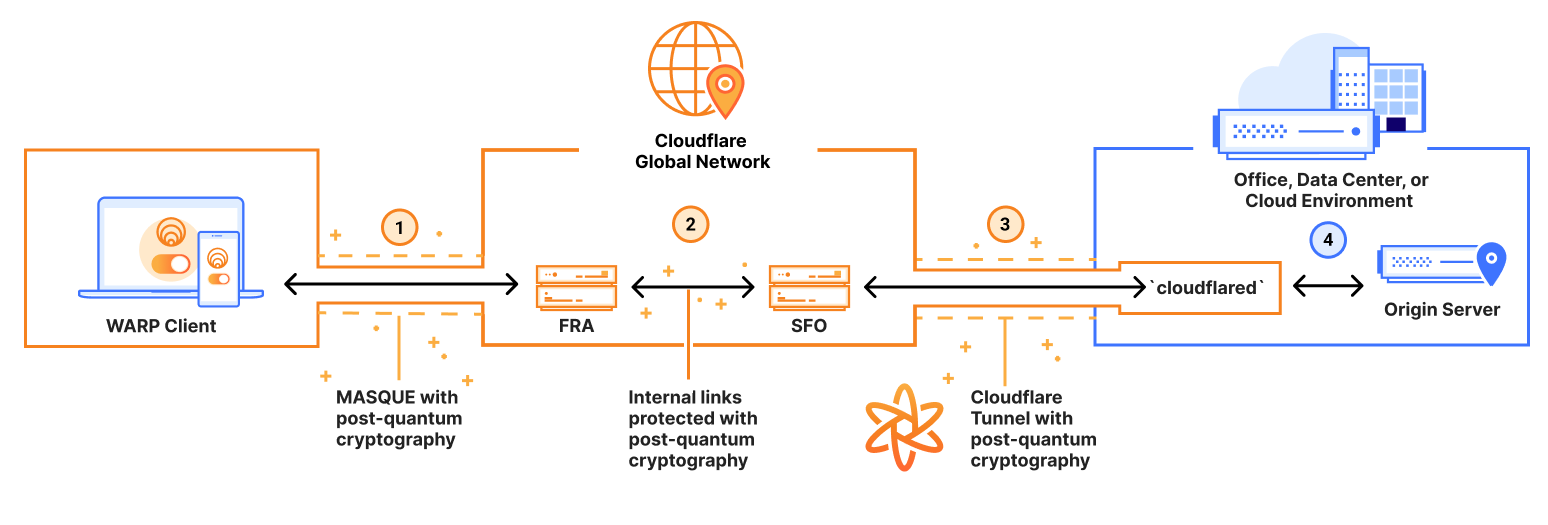

These algorithms aren’t just abstract concepts; they’re woven into the fabric of our digital lives. HTTPS, the protocol that secures websites, relies heavily on these algorithms to encrypt data transmitted between your browser and a server. Virtual Private Networks (VPNs) use them to create secure tunnels for your internet traffic. SSH, a protocol for secure remote access, depends on them to authenticate users and encrypt data. Even the digital signatures that verify the authenticity of software updates rely on these vulnerable systems.

The consequences of a successful quantum attack would be far-reaching. Financial transactions could be intercepted and manipulated, sensitive personal data exposed, and critical infrastructure compromised. Part of the risk is already present: nation-states and other well-resourced actors are investing heavily in quantum computing research, and an adversary can record encrypted traffic today and decrypt it once a capable quantum computer exists. This strategy is known as "store now, decrypt later" (or "harvest now, decrypt later"), and it means that data encrypted today could be exposed years from now.

Post-quantum cryptography algorithms

Post-Quantum Cryptography (PQC) refers to cryptographic algorithms that are believed to be resistant to attacks from both classical and quantum computers. NIST is leading the effort to standardize these new algorithms, which fall into several main families. Lattice-based cryptography, for example, relies on the difficulty of certain problems involving lattices (regular grids of points in high-dimensional space), such as finding a short vector in a lattice. Code-based cryptography relies on the difficulty of decoding general linear error-correcting codes.

Multivariate cryptography leverages the difficulty of solving systems of multivariate polynomial equations. Hash-based signatures rely only on the security of cryptographic hash functions. Finally, isogeny-based cryptography builds key exchange protocols from maps (isogenies) between elliptic curves, although the best-known isogeny scheme, SIKE, was broken by a classical attack in 2022. Each family has its own strengths and weaknesses, and NIST’s selection process aims to diversify our cryptographic toolkit.
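
To make the lattice idea concrete, here is a toy version of Regev-style encryption based on the Learning With Errors (LWE) problem, the family of problems that Kyber’s own Module-LWE construction builds on. The public key is a set of random linear equations in a secret vector, each with a small amount of noise added; without the secret, that noise is what makes the equations hard to solve. This is a minimal sketch under deliberately insecure assumptions: the tiny parameters (`N`, `M`, `Q`, `ERR`) are illustrative choices, Python’s `random` module is not a cryptographic generator, and this is not how Kyber itself is specified.

```python
import random

# Toy, INSECURE parameters chosen only so the example runs instantly (assumption).
N = 16    # length of the secret vector
M = 64    # number of noisy equations in the public key
Q = 3329  # modulus (Kyber uses this prime too; here it is just a convenient choice)
ERR = 2   # noise is drawn uniformly from [-ERR, ERR]


def dot(xs, ys):
    return sum(x * y for x, y in zip(xs, ys))


def keygen(rng):
    s = [rng.randrange(Q) for _ in range(N)]                      # secret key
    A = [[rng.randrange(Q) for _ in range(N)] for _ in range(M)]  # random public matrix
    e = [rng.randint(-ERR, ERR) for _ in range(M)]                # small noise
    b = [(dot(row, s) + e_i) % Q for row, e_i in zip(A, e)]       # b = A*s + e (mod Q)
    return (A, b), s


def encrypt(pk, bit, rng):
    A, b = pk
    rows = [i for i in range(M) if rng.random() < 0.5]   # random subset of equations
    u = [sum(A[i][j] for i in rows) % Q for j in range(N)]
    v = (sum(b[i] for i in rows) + bit * (Q // 2)) % Q   # hide the bit near 0 or Q/2
    return u, v


def decrypt(s, ct):
    u, v = ct
    d = (v - dot(u, s)) % Q  # leaves (sum of selected noise) + bit * Q/2, mod Q
    return 1 if Q // 4 < d < 3 * Q // 4 else 0


rng = random.Random(2024)  # NOT cryptographically secure; fine for a demo only
pk, sk = keygen(rng)
print([decrypt(sk, encrypt(pk, bit, rng)) for bit in (0, 1, 1, 0)])  # [0, 1, 1, 0]
```

Decryption always succeeds here because the accumulated noise (at most 64 × 2 = 128) stays well inside the Q/4 decision margin; real schemes tune their parameters so that this still holds while the underlying problem stays hard.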

The NIST PQC competition, launched in 2016, invited researchers worldwide to submit candidate algorithms. After multiple rounds of evaluation, NIST announced the first algorithms it would standardize in 2022 and published the first final standards in August 2024. These selections represent a significant milestone, but the transition to PQC is still in its early stages: the standards now exist, while the timeline for full deployment across products and protocols remains uncertain and will vary by vendor and sector.

NIST's specific recommendations

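NIST initially selected four algorithms for standardization: CRYSTALS-Kyber, CRYSTALS-Dilithium, FALCON, and SPHINCS+. CRYSTALS-Kyber is a key encapsulation mechanism (KEM), meaning it’s used to establish a shared secret key between two parties over an untrusted network. It’s designed to replace RSA-based key transport and (elliptic-curve) Diffie-Hellman key exchange. CRYSTALS-Dilithium and FALCON are both digital signature algorithms, offering alternatives to RSA and ECDSA signatures. The final standards use new names: Kyber became ML-KEM (FIPS 203), Dilithium became ML-DSA (FIPS 204), and SPHINCS+ became SLH-DSA (FIPS 205), while FALCON is being standardized separately as FN-DSA.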
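
The three operations every KEM exposes (key generation, encapsulation, and decapsulation) can be sketched as follows. To keep the example runnable with only Python’s standard library, the interface is filled in with a toy classical Diffie-Hellman construction, which is exactly the kind of quantum-vulnerable primitive Kyber is meant to replace. The function names and group parameters are illustrative assumptions, and the parameters are not secure. An ML-KEM implementation would sit behind the same three calls, which is also what makes swapping algorithms later practical.

```python
import hashlib
import secrets

# Toy group parameters (assumption): arithmetic modulo the Mersenne prime 2**127 - 1.
# Classical Diffie-Hellman like this is quantum-VULNERABLE and these sizes are NOT secure.
P = 2**127 - 1
G = 3


def _kdf(shared: int) -> bytes:
    # Hash the raw group element down to a fixed-size 32-byte shared secret.
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()


def keygen():
    """Receiver: create a key pair and publish pk."""
    sk = secrets.randbelow(P - 2) + 1
    return pow(G, sk, P), sk


def encaps(pk):
    """Sender: produce a fresh shared secret and a ciphertext that carries it."""
    eph = secrets.randbelow(P - 2) + 1
    ct = pow(G, eph, P)  # in this toy KEM the "ciphertext" is an ephemeral public value
    return ct, _kdf(pow(pk, eph, P))


def decaps(sk, ct):
    """Receiver: recover the same shared secret from the ciphertext."""
    return _kdf(pow(ct, sk, P))


pk, sk = keygen()                  # receiver publishes pk
ct, secret_sender = encaps(pk)     # sender transmits ct
secret_receiver = decaps(sk, ct)   # receiver derives the same key
print(secret_sender == secret_receiver)  # True
```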

SPHINCS+ is a stateless hash-based signature scheme. Unlike the other three, it doesn’t rely on structured mathematical problems such as lattices; its security rests only on the underlying hash function, which makes it a conservative backup option. However, its signatures are much larger and slower to produce, which can be a drawback in certain applications. According to the NSA, these algorithms represent a strong foundation for building quantum-resistant systems (nsa.gov).
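
SPHINCS+ doesn’t use the scheme below directly (it combines WOTS+ and FORS few-time signatures in a hypertree), but the classic Lamport one-time signature shows the principle that hash-based signatures share: security rests only on the hash function, and the price is size. This is a minimal sketch assuming SHA-256 as the hash. Each Lamport key pair must sign only one message; SPHINCS+’s tree structure is what lets it sign many messages without keeping state.

```python
import hashlib
import secrets


def H(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def keygen():
    # Private key: 256 pairs of random 32-byte values. Public key: their hashes.
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(H(a), H(b)) for a, b in sk]
    return pk, sk


def message_bits(message: bytes):
    digest = H(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def sign(sk, message: bytes):
    # Reveal one secret from each pair, chosen by the matching bit of H(message).
    # A Lamport key pair must NEVER sign a second message.
    return [pair[bit] for pair, bit in zip(sk, message_bits(message))]


def verify(pk, message: bytes, signature) -> bool:
    bits = message_bits(message)
    return len(signature) == 256 and all(
        H(s) == pair[bit] for s, pair, bit in zip(signature, pk, bits)
    )


pk, sk = keygen()
sig = sign(sk, b"firmware v1.2")
print(verify(pk, b"firmware v1.2", sig))  # True
print(verify(pk, b"firmware v1.3", sig))  # False
print(sum(len(part) for part in sig))     # 8192 (bytes)
```

The 8,192-byte signature (256 revealed 32-byte secrets) shows why hash-based signatures are bulkier than their lattice-based counterparts.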

These algorithms involve trade-offs. Some have larger keys, ciphertexts, or signatures that add bytes to every handshake, while others demand more processing power. You’ll have to choose based on whether you prioritize speed or key and signature size. NIST is still evaluating additional algorithms, so expect these recommendations to evolve as more analysis and deployment data become available.
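
A back-of-the-envelope calculation shows what "larger keys" means on the wire. The figures below are the parameter-set sizes for ML-KEM (the standardized Kyber), ML-DSA (Dilithium), SLH-DSA (SPHINCS+) and Falcon as published in FIPS 203, 204 and 205 and the Falcon specification, with Falcon-512’s signature size being a typical rather than fixed value; treat them as approximate and check them against the standards. The sketch counts only key-exchange and signature bytes, ignoring certificate chains and computation time.

```python
# Sizes in bytes per parameter set (check against FIPS 203/204/205 and the
# Falcon specification; Falcon-512 signatures vary slightly around 666 bytes).
KEM_SIZES = {  # name: (public key, ciphertext)
    "X25519 (classical)": (32, 32),
    "ML-KEM-512": (800, 768),
    "ML-KEM-768": (1184, 1088),
    "ML-KEM-1024": (1568, 1568),
}
SIG_SIZES = {  # name: (public key, signature)
    "Ed25519 (classical)": (32, 64),
    "ML-DSA-44": (1312, 2420),
    "ML-DSA-65": (1952, 3309),
    "Falcon-512": (897, 666),
    "SLH-DSA-SHA2-128s": (32, 7856),
    "SLH-DSA-SHA2-128f": (32, 17088),
}


def kem_bytes(name: str) -> int:
    # One public key travels one way and one ciphertext comes back.
    public_key, ciphertext = KEM_SIZES[name]
    return public_key + ciphertext


def sig_bytes(name: str) -> int:
    # Public key (e.g. inside a certificate) plus one signature.
    public_key, signature = SIG_SIZES[name]
    return public_key + signature


for name in KEM_SIZES:
    print(f"{name:<22}{kem_bytes(name):>7} bytes")
hybrid = kem_bytes("X25519 (classical)") + kem_bytes("ML-KEM-768")
print(f"{'X25519 + ML-KEM-768':<22}{hybrid:>7} bytes")  # 2336 bytes
for name in SIG_SIZES:
    print(f"{name:<22}{sig_bytes(name):>7} bytes")
```

Going from 64 bytes (X25519) to roughly 2.3 KB for a classical-plus-ML-KEM-768 exchange is usually tolerable; the bigger jumps come from signatures, especially SLH-DSA.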

- CRYSTALS-Kyber: Key Encapsulation Mechanism (KEM)

- CRYSTALS-Dilithium: Digital Signature

- FALCON: Digital Signature

- SPHINCS+: Stateless Hash-Based Signature

NIST Post-Quantum Algorithms and Candidates: A Comparative Overview

| Algorithm | Primary Use Case | Estimated Performance Impact | Known Vulnerabilities/Considerations |
|---|---|---|---|
| CRYSTALS-Kyber (ML-KEM) | Key Encapsulation Mechanism (KEM) | Lower | Larger keys and ciphertexts than classical algorithms. Considered a strong, general-purpose KEM. |
| CRYSTALS-Dilithium (ML-DSA) | Digital Signature | Medium | Signatures are larger than those of most current schemes. Offers a good balance of security and performance. |
| FALCON (FN-DSA) | Digital Signature | Lower | Smaller signatures than Dilithium, suiting bandwidth-constrained environments. Signing relies on floating-point arithmetic, so implementations need care to be both fast and side-channel resistant. |
| SPHINCS+ (SLH-DSA) | Digital Signature | Higher | Stateless, with security resting only on hash functions. Much larger signatures and slower signing than the other options. |
| BIKE | Key Encapsulation Mechanism (KEM) | Medium | Fourth-round candidate, not a selected standard. Code-based, offering a different security foundation; generally slower than lattice-based KEMs like Kyber. |
| Classic McEliece | Key Encapsulation Mechanism (KEM) | Higher | Fourth-round candidate, not a selected standard. Very large public keys (hundreds of kilobytes up to about a megabyte) are a significant practical limitation. Offers a conservative security margin. |

Qualitative comparison. BIKE and Classic McEliece were evaluated in NIST’s fourth round; in March 2025 NIST instead selected another code-based KEM, HQC, as a backup to ML-KEM. Confirm current details in NIST’s official documentation before making implementation choices.

Finding your network's weak points

The first step in preparing for the quantum threat is to understand your organization’s exposure. This involves identifying critical data – information that needs to be protected for the long term – and mapping data flows to understand where that data is stored and how it’s transmitted. You need to create a comprehensive inventory of the cryptographic algorithms currently in use across your entire IT infrastructure.

This isn’t a one-time exercise. It’s an ongoing process that requires regular updates and monitoring. Consider which systems rely on vulnerable algorithms like RSA and ECC, and prioritize those for migration. Pay attention to data in transit – encrypted web traffic, VPN connections – and data at rest – stored databases, file servers. Focus on long-lived secrets, like encryption keys, that could be compromised and exploited years after they’re created.
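
A common way to turn "long-lived secrets" into a priority list is Mosca’s inequality: if x (how long the data must stay confidential) plus y (how long migration will take) exceeds z (the time until a cryptographically relevant quantum computer exists), that data is already at risk from store-now-decrypt-later attacks. The sketch below applies the inequality to an inventory; the `DataAsset` fields, the example assets, and the 10-year value for z are hypothetical placeholders, not a forecast.

```python
from dataclasses import dataclass


@dataclass
class DataAsset:              # hypothetical inventory record
    name: str
    shelf_life_years: float   # x: how long the data must stay confidential
    migration_years: float    # y: how long moving this system to PQC will take


def at_risk(asset: DataAsset, years_until_quantum: float) -> bool:
    """Mosca's inequality: the data is exposed if x + y > z."""
    return asset.shelf_life_years + asset.migration_years > years_until_quantum


def prioritize(assets, years_until_quantum):
    # Keep only at-risk assets, most exposed (largest x + y) first.
    flagged = [a for a in assets if at_risk(a, years_until_quantum)]
    return sorted(flagged, key=lambda a: a.shelf_life_years + a.migration_years, reverse=True)


assets = [  # hypothetical examples
    DataAsset("session cookies", shelf_life_years=0.1, migration_years=1),
    DataAsset("customer health records", shelf_life_years=25, migration_years=3),
    DataAsset("site-to-site VPN traffic", shelf_life_years=7, migration_years=4),
]
Z = 10  # ASSUMED years until a cryptographically relevant quantum computer; not a forecast
for asset in prioritize(assets, Z):
    print(asset.name)
# customer health records
# site-to-site VPN traffic
```

Because z is uncertain, it’s worth rerunning the ranking with several values; assets that stay flagged even under optimistic assumptions are the clearest candidates for early migration.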

Here’s a checklist to get you started:

- Are you using TLS 1.2 or earlier?

- Do you rely on RSA or ECC for key exchange or digital signatures?

- What key lengths are you using?

- Where is sensitive data stored?

- Which applications use cryptographic algorithms?

- Which third-party services do you rely on, and which cryptographic algorithms do they use?

Answering these questions will give you a clear picture of your organization’s exposure.
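
Parts of that checklist can be automated once you have an inventory. The sketch below reads a CSV export and flags legacy TLS versions, plus key-exchange or signature entries that rely only on public-key algorithms broken by Shor’s algorithm; note that TLS 1.3 with a classical key exchange is still flagged. The column names, algorithm spellings, and sensitivity labels are hypothetical, so adapt them to whatever your asset-management or scanning tools actually export.

```python
import csv
import io

# Public-key algorithms broken by Shor's algorithm, as they might be spelled in an inventory.
SHOR_VULNERABLE = {"RSA", "DSA", "DH", "ECDH", "ECDHE", "ECDSA", "ED25519", "X25519"}
PQC = {"ML-KEM-512", "ML-KEM-768", "ML-KEM-1024", "ML-DSA-44", "ML-DSA-65", "ML-DSA-87"}
LEGACY_TLS = {"SSLV3", "TLSV1.0", "TLSV1.1", "TLSV1.2"}

# Hypothetical CSV export; real column names depend on your tooling.
INVENTORY_CSV = """system,protocol,key_exchange,signature,sensitivity
public website,TLSv1.3,X25519,ECDSA,low
payments API,TLSv1.2,ECDHE,RSA,high
file transfer gateway,TLSv1.3,X25519+ML-KEM-768,ML-DSA-65,high
"""


def review(row):
    findings = []
    if row["protocol"].upper() in LEGACY_TLS:
        findings.append(f"{row['protocol']} (TLS 1.2 or earlier)")
    for field in ("key_exchange", "signature"):
        # Hybrid entries such as "X25519+ML-KEM-768" are split into their parts.
        parts = {p.strip().upper() for p in row[field].split("+")}
        if parts <= SHOR_VULNERABLE:
            findings.append(f"{field} {row[field]} is quantum-vulnerable")
        elif not parts <= SHOR_VULNERABLE | PQC:
            findings.append(f"{field} {row[field]} is unrecognized; review manually")
    return findings


for row in csv.DictReader(io.StringIO(INVENTORY_CSV)):
    issues = review(row)
    priority = "HIGH" if issues and row["sensitivity"] == "high" else "normal"
    print(f"[{priority}] {row['system']}: {'; '.join(issues) or 'no findings'}")
```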

Migration strategies and tools

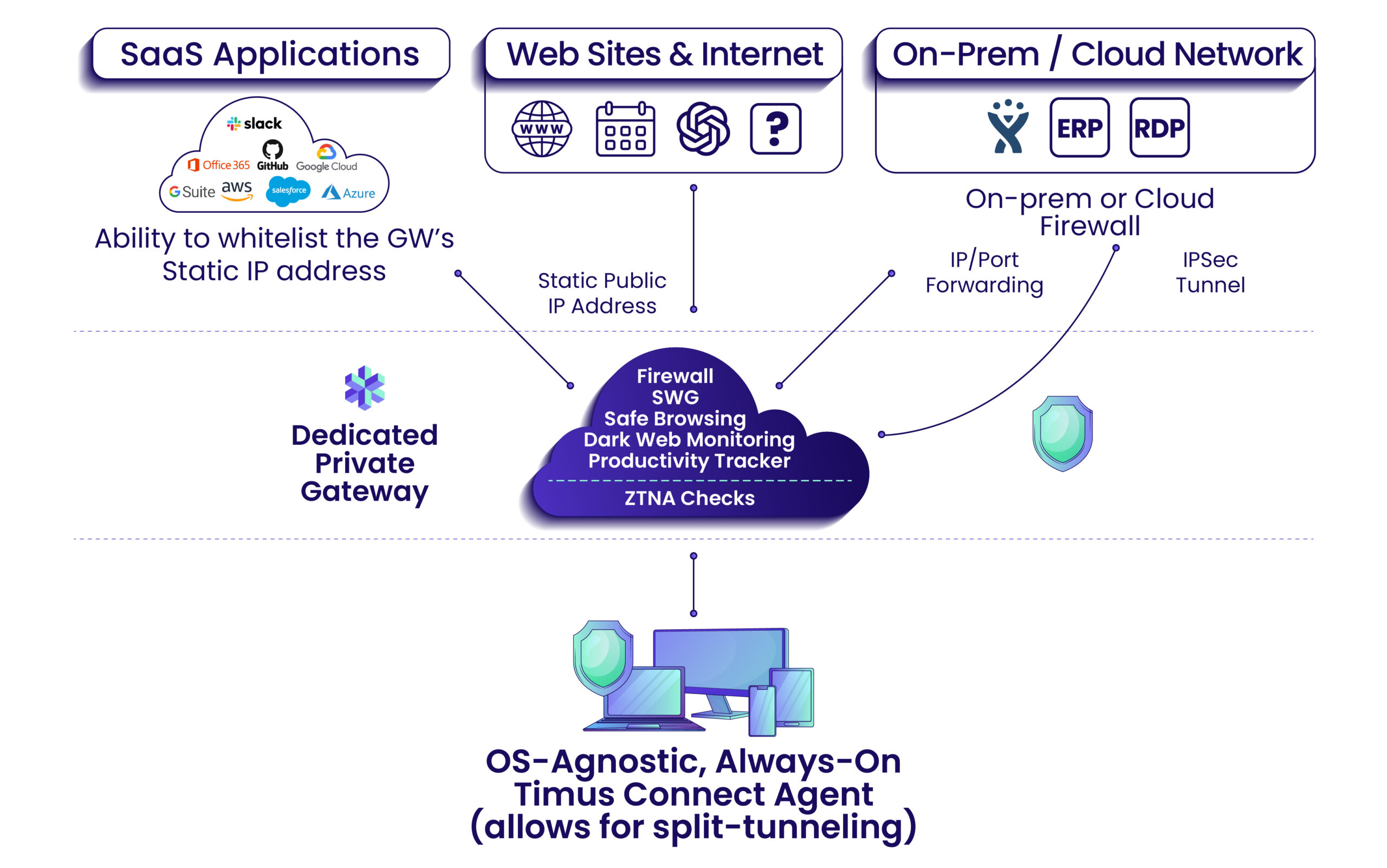

There are several approaches to migrating to PQC. Crypto agility is a key principle: designing systems that can swap cryptographic algorithms without major code changes, typically by abstracting cryptographic operations behind standardized interfaces and selecting algorithms through configuration. A hybrid approach combines a classical and a PQC algorithm so that the result stays secure as long as either one holds, providing a fallback in case a new PQC algorithm turns out to be weaker than expected.
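
Here is a minimal sketch of a hybrid combiner, assuming the classical and PQC key exchanges have each already produced a 32-byte shared secret: concatenate the two and feed them through HKDF (RFC 5869), so an attacker must recover both secrets to learn the session key. Hybrid TLS designs follow a similar concatenate-then-derive pattern, but the function names, the `example-hybrid-v1` label, and the transcript handling here are illustrative assumptions, not any protocol’s exact construction. The shared secrets in the usage example are random stand-ins.

```python
import hashlib
import hmac
import secrets


def hkdf_sha256(ikm: bytes, info: bytes, length: int = 32, salt: bytes = b"") -> bytes:
    """HKDF (RFC 5869) with SHA-256, built from the standard library's HMAC."""
    prk = hmac.new(salt or bytes(32), ikm, hashlib.sha256).digest()  # extract step
    okm, block, counter = b"", b"", 1
    while len(okm) < length:                                         # expand step
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def hybrid_session_key(classical_ss: bytes, pq_ss: bytes, transcript: bytes) -> bytes:
    # Both secrets go into one KDF input, so an attacker must break BOTH key
    # exchanges to learn the session key. The version label is a hypothetical
    # name; changing it is how the combiner itself could be swapped later.
    return hkdf_sha256(classical_ss + pq_ss, info=b"example-hybrid-v1|" + transcript)


# Random stand-ins for real outputs (e.g. from X25519 and ML-KEM-768).
classical_ss = secrets.token_bytes(32)
pq_ss = secrets.token_bytes(32)
key = hybrid_session_key(classical_ss, pq_ss, transcript=b"client-hello|server-hello")
print(len(key))  # 32
```

Binding the handshake transcript into `info` ties the derived key to this particular exchange, so the same pair of secrets can’t be replayed into a different session.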

Ultimately, the goal is full PQC deployment, where all vulnerable algorithms are replaced with quantum-resistant alternatives. This will be a complex and time-consuming undertaking that requires careful planning and execution. Several tools and libraries can help with the migration, but the ecosystem is still evolving. OpenSSL, a widely used cryptography library, added native support for ML-KEM, ML-DSA, and SLH-DSA in version 3.5.

Be realistic about the challenges. Retrofitting existing systems can be difficult and expensive. Thorough testing is essential to ensure that PQC algorithms are implemented correctly and don’t introduce new vulnerabilities. Consider the performance implications of PQC algorithms, and optimize your systems accordingly. It’s a significant undertaking, but one that’s becoming increasingly necessary.

Steps to take through 2026

A phased approach is the most sensible way to tackle this transition. Phase 1 (2024) should focus on awareness and assessment. Educate your team about the quantum threat and conduct a thorough vulnerability assessment. Phase 2 (2025) should involve pilot projects and testing. Implement PQC algorithms in non-critical systems and evaluate their performance and security.

Phase 3 (2026 onwards) should be a gradual deployment and monitoring phase. Begin replacing vulnerable algorithms in critical systems, starting with those that protect the most sensitive data. Continuously monitor your systems for vulnerabilities and stay informed about the latest developments in PQC. The Swiss Cyber Institute provides a helpful guide for non-technical leaders (swisscyberinstitute.com).

Staying informed is paramount. The PQC landscape is rapidly evolving, and new algorithms and tools are constantly being developed. Prioritize continuous learning and adaptation. This isn’t a problem you solve once and forget about; it requires ongoing vigilance and proactive measures.
