The quantum threat

For decades, our digital security has relied on the mathematical difficulty of certain problems. Algorithms like RSA and ECC, which protect everything from online banking to government communications, are based on the idea that breaking them would take classical computers longer than the universe has existed. However, the emergence of quantum computing throws this assumption into question. Quantum computers, leveraging the principles of quantum mechanics, have the potential to solve these problems with incredible speed.

The core of this threat lies in Shor’s algorithm, a quantum algorithm developed by Peter Shor in 1994. This algorithm can efficiently factor large integers, the problem on which RSA’s security rests, and solve the discrete logarithm problem, including the elliptic-curve variant that underpins ECC. If a sufficiently powerful quantum computer were built, it could break these widely used encryption methods, exposing sensitive data to malicious actors. The implications are far-reaching, potentially compromising financial transactions, intellectual property, and national security.
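To see why efficient factoring is fatal to RSA, here is a minimal sketch using a deliberately tiny textbook key. Trial division stands in for Shor’s algorithm, which is an assumption made purely for illustration: on real 2048-bit moduli trial division is hopeless, and that gap is exactly what a quantum computer would close. The function names are hypothetical.

```python
from __future__ import annotations
from math import isqrt


def factor_semiprime(n: int) -> tuple[int, int]:
    """Factor n = p * q by trial division (feasible only because n is tiny)."""
    for p in range(2, isqrt(n) + 1):
        if n % p == 0:
            return p, n // p
    raise ValueError("n has no nontrivial factors")


def recover_private_exponent(n: int, e: int) -> int:
    """Derive the RSA private exponent d from the public key alone."""
    p, q = factor_semiprime(n)
    phi = (p - 1) * (q - 1)
    # d is the modular inverse of e modulo phi(n) (Python 3.8+).
    return pow(e, -1, phi)


# Toy public key: n = 61 * 53, e = 17.
n, e = 3233, 17
ciphertext = pow(65, e, n)          # someone encrypts the message 65

d = recover_private_exponent(n, e)  # attacker only needs n and e
recovered = pow(ciphertext, d, n)
print(d, recovered)
```

Once the factors are known, everything else is ordinary arithmetic. The entire security of the key rests on the factoring step being infeasible.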

The year 2026 is becoming a focal point for cybersecurity professionals because of projected advancements in quantum computing. While fully functional, cryptographically relevant quantum computers aren’t here today, experts predict significant progress within the next few years. The concern isn’t an immediate, overnight collapse of security. It’s the 'harvest now, decrypt later' attack model, in which adversaries collect and store encrypted data today, anticipating the ability to decrypt it once quantum computers mature.

Quantum computers are currently expensive and error-prone. While they aren't ready to break encryption yet, IBM and Google are making steady progress. We need to prepare now because security updates take years to roll out across global infrastructure.

How post-quantum cryptography works

Post-quantum cryptography (PQC) refers to cryptographic algorithms that are believed to be secure against attacks by both classical and quantum computers. Unlike current public-key cryptography, PQC doesn't rely on the mathematical problems that Shor’s algorithm can solve. Instead, it's based on different mathematical structures that are thought to be resistant to quantum attacks. This is not a simple replacement of algorithms; it's a fundamental shift in how we approach data security.

The National Institute of Standards and Technology (NIST) has been leading a multi-year standardization process to identify and standardize PQC algorithms. In July 2022, NIST announced the first set of algorithms selected for standardization: CRYSTALS-Kyber for key encapsulation, CRYSTALS-Dilithium and FALCON for digital signatures, and SPHINCS+ as a backup signature scheme. In August 2024, it published the first three finished standards: FIPS 203 (ML-KEM, derived from CRYSTALS-Kyber), FIPS 204 (ML-DSA, derived from CRYSTALS-Dilithium), and FIPS 205 (SLH-DSA, derived from SPHINCS+). These algorithms are the leading candidates for replacing vulnerable cryptographic systems.

PQC isn’t a single solution, but rather a collection of different approaches. Lattice-based cryptography, like CRYSTALS-Kyber and CRYSTALS-Dilithium, relies on the difficulty of solving problems involving lattices, which are regular grids of points in high-dimensional space. Code-based cryptography utilizes error-correcting codes. Multivariate cryptography leverages the complexity of solving systems of multivariate polynomial equations. Hash-based cryptography, exemplified by SPHINCS+, relies on the security of cryptographic hash functions. Isogeny-based cryptography wasn’t part of the initial NIST selection, and its best-known candidate, SIKE, was broken by a classical attack in 2022.

Each approach has its own strengths and weaknesses. Lattice-based cryptography is currently favored for its performance and security. Hash-based cryptography is considered very conservative but can have larger signature sizes. The diversity of approaches is intentional. NIST’s goal is to provide a range of options to minimize the risk of a single vulnerability compromising all PQC systems. The standardization process is ongoing, and more algorithms may be added in the future.
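The trade-off for hash-based schemes can be made concrete with a Lamport one-time signature, the simplest member of that family. This is a sketch for intuition only: SPHINCS+ is a far more elaborate construction, and a Lamport key must never sign more than one message. The helper names are illustrative.

```python
import hashlib
import secrets


def H(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def keygen():
    """One pair of random secrets per bit of the message digest."""
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(H(zero), H(one)) for zero, one in sk]
    return sk, pk


def message_bits(message: bytes):
    digest = H(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def sign(sk, message: bytes):
    """Reveal, for each digest bit, the secret matching that bit's value."""
    return [sk[i][bit] for i, bit in enumerate(message_bits(message))]


def verify(pk, message: bytes, signature) -> bool:
    bits = message_bits(message)
    if len(signature) != 256:
        return False
    return all(H(part) == pk[i][bit]
               for i, (part, bit) in enumerate(zip(signature, bits)))


sk, pk = keygen()
sig = sign(sk, b"rotate the root certificate")
signature_bytes = sum(len(part) for part in sig)
print(verify(pk, b"rotate the root certificate", sig), signature_bytes)
```

Security here depends only on the hash function, which is why the approach is considered conservative. The cost is visible in the size: 256 revealed secrets of 32 bytes each, compared with 64 bytes for an Ed25519 signature.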

- CRYSTALS-Kyber: Key encapsulation mechanism

- CRYSTALS-Dilithium: Digital signature algorithm

- FALCON: Digital signature algorithm

- SPHINCS+: Digital signature algorithm (backup)

Managing digital identities

Keyfactor positions itself as a leader in Digital Trust for the AI & Quantum Era. The company’s premise is that maintaining trust is paramount in a world that relies increasingly on artificial intelligence while facing the threat of quantum computing. Its offerings focus on managing the entire lifecycle of digital identities and cryptographic keys, a task that is becoming increasingly complex.

Keyfactor’s Certificate Lifecycle Automation aims to stop certificate outages and streamline the management of digital certificates. This is critical in the context of PQC, as organizations will need to manage the issuance, renewal, and revocation of new quantum-resistant certificates alongside their existing infrastructure. They also offer a Modern PKI Platform that allows organizations to establish trust and issue identities at scale.

The company emphasizes the need to automate the discovery of cryptographic assets, a crucial first step in preparing for the quantum threat. Understanding what systems are using vulnerable algorithms and where they are deployed is essential for prioritizing migration efforts. Keyfactor’s Cryptographic Discovery & Inventory tools help organizations gain visibility into their cryptographic posture. While specific pricing details aren't publicly available, the focus is on a comprehensive solution for managing digital trust in a rapidly evolving landscape.

Securing AI in the Quantum Era

The relationship between AI and quantum security is bidirectional. A presentation from IBM Technology (Securing AI for the Quantum Era: A CISOs Cyber Security Guide, January 29, 2026) highlights that AI can be a powerful tool for enhancing cybersecurity defenses against quantum attacks. AI-powered threat detection systems can analyze network traffic and identify anomalies that might indicate a quantum-based attack.

However, quantum computing also poses a threat to AI systems. Many AI algorithms rely on cryptographic techniques for secure data processing and model protection. If these cryptographic techniques are broken by a quantum computer, the integrity and confidentiality of AI systems could be compromised. This is particularly concerning for applications like federated learning, where sensitive data is shared between multiple parties.

The IBM presentation emphasized the importance of visibility and proactive security measures. Organizations need to understand their attack surface and identify potential vulnerabilities before they are exploited. This requires a comprehensive security strategy that encompasses both traditional and quantum-resistant security controls.

Furthermore, the demand for skilled professionals in quantum computation is growing. The presentation noted the availability of certifications for Quantum Computation using Qiskit v2.X, suggesting a need to build a workforce capable of developing and deploying quantum-safe security solutions. Investing in training and education is essential for organizations to effectively navigate the quantum era.

Practical Steps for Quantum Readiness

Preparing for the quantum threat isn’t a one-time project. It’s an ongoing process that requires a phased approach. The first step is to inventory your cryptographic assets. Identify all systems, applications, and data that rely on cryptography, and determine which algorithms they use. This is a significant undertaking, but it’s essential for understanding your exposure.
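A first pass at such an inventory can be as simple as searching configuration text for the names of quantum-vulnerable algorithms. The sketch below is one rough way to do that, not a substitute for a dedicated discovery tool. The pattern, the function name, and the sample configuration are all assumptions, and a name-based search will miss algorithms that are compiled in or negotiated at runtime.

```python
import re
from collections import Counter

# Names of public-key algorithms that Shor's algorithm threatens.
# The list is illustrative and deliberately short.
VULNERABLE = re.compile(
    r"(?<![A-Za-z0-9])(RSA|ECDSA|ECDHE?|DSA|DHE?)(?![A-Za-z0-9])",
    re.IGNORECASE,
)


def scan_text(text: str) -> Counter:
    """Count mentions of quantum-vulnerable algorithms in one blob of text."""
    return Counter(match.group(1).upper() for match in VULNERABLE.finditer(text))


# Hypothetical configuration snippets standing in for real files.
sample = (
    "ssl_ciphers ECDHE-RSA-AES128-GCM-SHA256;\n"
    "HostKeyAlgorithms ssh-rsa,ecdsa-sha2-nistp256\n"
)
findings = scan_text(sample)
for algorithm, count in findings.most_common():
    print(f"{algorithm}: {count}")
```

To cover a real tree, the same function could be applied to each file found with `pathlib.Path(root).rglob("*.conf")`, keeping the per-file counts so that findings can be traced back to an owner.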

Once you have a clear inventory, prioritize systems for migration. Focus on the most critical systems and data first: those that would cause the greatest damage if compromised. Systems handling long-lived secrets, such as encryption keys for archival data, should be prioritized. Avoid a 'rip and replace' strategy; instead, adopt a hybrid approach that runs PQC algorithms alongside the existing ones.
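One way to turn "long-lived secrets first" into a ranking is the rule of thumb often called Mosca’s inequality, which is not part of this article’s sources. It says data is exposed when the years it must stay secret plus the years migration will take exceed the years until a cryptographically relevant quantum computer exists. The sketch below assumes that rule; the system names and every number in it are hypothetical planning inputs, not predictions.

```python
from dataclasses import dataclass


@dataclass
class System:
    name: str
    secrecy_years: float    # how long the data must remain confidential
    migration_years: float  # estimated time to move the system to PQC


def exposure_margin(system: System, years_until_quantum: float) -> float:
    """Positive means data could still be sensitive when it becomes decryptable."""
    return system.secrecy_years + system.migration_years - years_until_quantum


def prioritize(systems, years_until_quantum: float):
    """Most exposed systems first."""
    return sorted(
        systems,
        key=lambda s: exposure_margin(s, years_until_quantum),
        reverse=True,
    )


# Hypothetical inventory and a hypothetical 10-year planning horizon.
inventory = [
    System("web session cache", secrecy_years=0.1, migration_years=1),
    System("archival backups", secrecy_years=25, migration_years=3),
    System("payment gateway", secrecy_years=7, migration_years=4),
]
ranked = prioritize(inventory, years_until_quantum=10)
for system in ranked:
    print(system.name, exposure_margin(system, 10))
```

Because the horizon is a guess, it is worth rerunning the ranking with several values and watching which systems stay at the top.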

Start testing PQC algorithms. NIST’s standardized algorithms are now available for testing and integration. Experiment with different PQC libraries and tools to understand their performance characteristics and compatibility with your existing infrastructure. This will help you identify potential challenges and refine your migration strategy.
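Before running any library benchmarks, a back-of-the-envelope size check already shows one compatibility issue: post-quantum key shares are much larger than classical ones. The sketch below adds up the public sizes for a hybrid key exchange. The ML-KEM figures are the encapsulation-key and ciphertext sizes from FIPS 203, the X25519 figure is its 32-byte public key, and the 1200-byte budget is a hypothetical threshold chosen only to illustrate the check.

```python
from __future__ import annotations

# (bytes sent by the client, bytes sent back by the server)
KEY_SHARE_BYTES = {
    "X25519": (32, 32),
    "ML-KEM-512": (800, 768),
    "ML-KEM-768": (1184, 1088),
    "ML-KEM-1024": (1568, 1568),
}


def hybrid_share_sizes(classical: str, post_quantum: str) -> tuple[int, int]:
    """Total key-share bytes in each direction when both schemes are used."""
    c_client, c_server = KEY_SHARE_BYTES[classical]
    p_client, p_server = KEY_SHARE_BYTES[post_quantum]
    return c_client + p_client, c_server + p_server


def fits_budget(size: int, budget: int) -> bool:
    return size <= budget


HYPOTHETICAL_BUDGET = 1200  # assumed per-message allowance, not a standard

client_bytes, server_bytes = hybrid_share_sizes("X25519", "ML-KEM-768")
print(client_bytes, server_bytes, fits_budget(client_bytes, HYPOTHETICAL_BUDGET))
```

A first handshake message that used to carry a 32-byte share now carries more than a kilobyte, which can matter for middleboxes and constrained devices that assume small handshakes. Measuring that on your own network is a good early test.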

Finally, develop a quantum risk management plan. This plan should outline your organization’s approach to mitigating the quantum threat, including timelines, responsibilities, and resource allocation. Regularly review and update this plan as the threat landscape evolves. It’s also important to stay informed about the latest developments in PQC and quantum computing.

Network Security Considerations

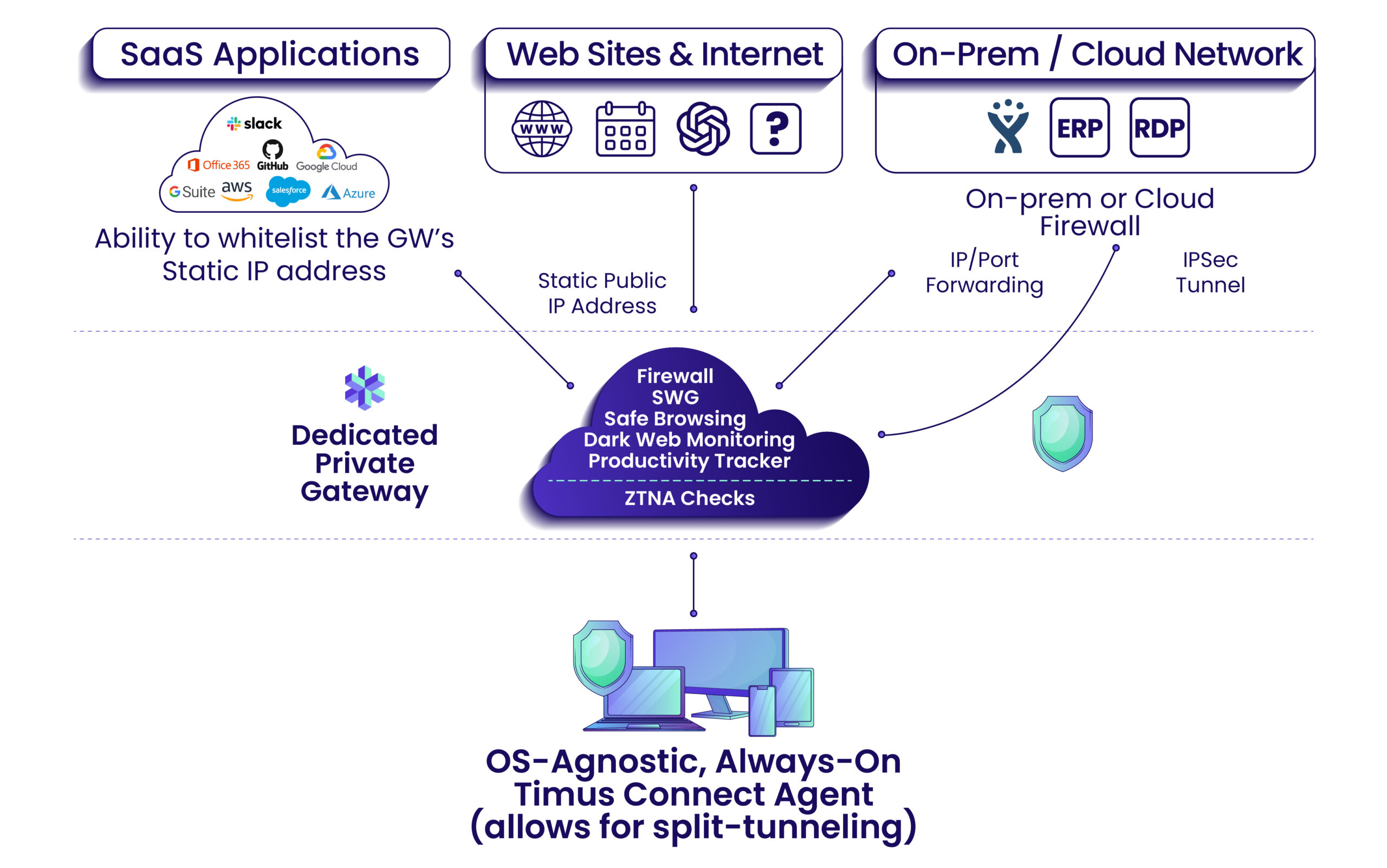

The transition to quantum-safe cryptography will have a significant impact on network security protocols. TLS, SSH, and VPN protocols such as IPsec rely on public-key cryptography for key exchange and authentication. These protocols will need to be upgraded to support PQC algorithms to remain secure against quantum attacks.

Upgrading these protocols is a complex undertaking. It requires changes to both client and server software, as well as careful consideration of interoperability issues. Ensuring that different systems can communicate securely with each other using PQC algorithms is a major challenge. The process will likely involve negotiation of cryptographic algorithms between communicating parties.
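The negotiation step can be sketched in a few lines. This is a simplified model, not the logic of any particular protocol: real protocols differ in which side has the final say and in how they protect the negotiation from tampering. The group names are used as illustrative labels, and the `require_pq` flag is a hypothetical policy switch.

```python
from __future__ import annotations

# Groups that include a post-quantum component (illustrative set).
PQ_GROUPS = {"X25519MLKEM768"}


def negotiate(
    client_prefs: list[str],
    server_supported: set[str],
    require_pq: bool = False,
) -> str | None:
    """Return the first client-preferred group the server also supports.

    With require_pq=True, classical-only groups are skipped, so the result
    is None rather than a silent fallback to a vulnerable exchange.
    """
    for group in client_prefs:
        if group not in server_supported:
            continue
        if require_pq and group not in PQ_GROUPS:
            continue
        return group
    return None


client = ["X25519MLKEM768", "x25519", "secp256r1"]
upgraded_server = {"X25519MLKEM768", "x25519"}
legacy_server = {"x25519", "secp256r1"}

print(negotiate(client, upgraded_server))
print(negotiate(client, legacy_server))
print(negotiate(client, legacy_server, require_pq=True))
```

The third call shows the interoperability tension: refusing to fall back protects against downgrade, but it also cuts off every peer that has not been upgraded yet.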

Quantum key distribution (QKD) is another approach to securing network communications in the quantum era. QKD uses the principles of quantum mechanics to distribute encryption keys securely. However, QKD is currently limited by its range and cost. It’s not a drop-in replacement for traditional cryptography, but it may be suitable for specific applications requiring the highest levels of security.

The move to quantum-resistant network protocols isn’t just a technical challenge; it’s also a logistical one. Coordinating upgrades across a large and distributed network can be difficult and time-consuming. A phased rollout, starting with the most critical systems, is the recommended approach.

Long-Term Strategy & Hybrid Approaches

A successful quantum security strategy must be long-term and adaptable. The field of quantum computing is evolving rapidly, and new threats and vulnerabilities may emerge. Organizations need to be prepared to adjust their security posture as the landscape changes. This requires a commitment to ongoing research and development.

Crypto-agility – the ability to quickly and easily switch between different cryptographic algorithms – is a key element of a long-term quantum security strategy. This allows organizations to respond to new threats and take advantage of advancements in PQC. It also reduces the risk of being locked into a single, vulnerable algorithm.
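In code, crypto-agility usually comes down to two habits: choosing the algorithm from configuration rather than hard-coding it, and storing an algorithm identifier next to every output so that older data stays verifiable after the default changes. The sketch below shows that pattern with HMAC from the standard library. HMAC is only a stand-in here, since the standard library has no PQC primitives, and the token format and function names are assumptions.

```python
import hashlib
import hmac

# In a real system these would come from configuration, not source code.
ALLOWED_ALGORITHMS = {"sha256", "sha512", "sha3_256"}
DEFAULT_ALGORITHM = "sha256"


def protect(key: bytes, message: bytes, algorithm: str = DEFAULT_ALGORITHM) -> str:
    """Return 'algorithm$tag' so the verifier knows how the tag was made."""
    if algorithm not in ALLOWED_ALGORITHMS:
        raise ValueError(f"algorithm not allowed: {algorithm}")
    tag = hmac.new(key, message, algorithm).hexdigest()
    return f"{algorithm}${tag}"


def check(key: bytes, message: bytes, token: str) -> bool:
    """Verify a token using whichever allowed algorithm it names."""
    algorithm, _, tag = token.partition("$")
    if algorithm not in ALLOWED_ALGORITHMS:
        return False  # retired or unknown algorithms are rejected outright
    expected = hmac.new(key, message, algorithm).hexdigest()
    return hmac.compare_digest(expected, tag)


key = b"k" * 32
old_token = protect(key, b"audit record")              # made under the old default
new_token = protect(key, b"audit record", "sha3_256")  # made after a switch
print(check(key, b"audit record", old_token), check(key, b"audit record", new_token))
```

Retiring an algorithm then means removing one entry from the allowed set, with no change to calling code. The same shape applies to signatures and key encapsulation once PQC libraries are in place.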

During the transition period, a hybrid approach is recommended. This involves combining traditional cryptography with PQC algorithms. For example, a TLS connection might combine a classical elliptic-curve key exchange such as X25519 with CRYSTALS-Kyber (ML-KEM), deriving the session key from both results. An attacker then has to break both schemes, which protects against quantum attacks while guarding against an undiscovered flaw in the newer algorithm.
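The combining step of a hybrid exchange can be sketched as follows: both shared secrets are concatenated and passed through a key derivation function, so the session key stays secret as long as either input does. This is a minimal illustration, not the exact key schedule of TLS or any other protocol. The two input secrets are fixed placeholder bytes standing in for the outputs of a real classical exchange and a real PQC key encapsulation, and the context label is hypothetical.

```python
import hashlib
import hmac


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256: extract, then expand."""
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine_shared_secrets(
    classical_secret: bytes,
    pq_secret: bytes,
    context: bytes = b"hybrid-kex-demo",  # hypothetical label
) -> bytes:
    """Derive one session key that depends on both input secrets."""
    return hkdf_sha256(classical_secret + pq_secret, salt=b"\x00" * 32, info=context)


# Placeholders: in practice these come from the two key exchanges.
classical_secret = b"\x01" * 32
pq_secret = b"\x02" * 32

session_key = combine_shared_secrets(classical_secret, pq_secret)
print(session_key.hex())
```

Changing either input changes the derived key, which is the property that lets the classical component cover for the post-quantum one and vice versa.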

Standardization is an ongoing process. NIST will likely add more algorithms or retire current ones as we learn more about their performance in the wild. We have to keep our systems flexible enough to swap these out as the math evolves.
